Driverless cars might just bring about the biggest societal revolution since, well, the industrial revolution, and it appears that everyone’s getting in on it. But how do they see?

Object Detection technologies for self-driving cars

The 90s had a thing for fantasizing about cars that drive themselves. People called it crazy back then, but it turns out they predicted the future pretty well. From rumors of the Apple self-driving car to real-world driverless car applications from companies like Lyft and Uber, autonomous vehicles are poised to become a staple of the automotive industry within just a few years.

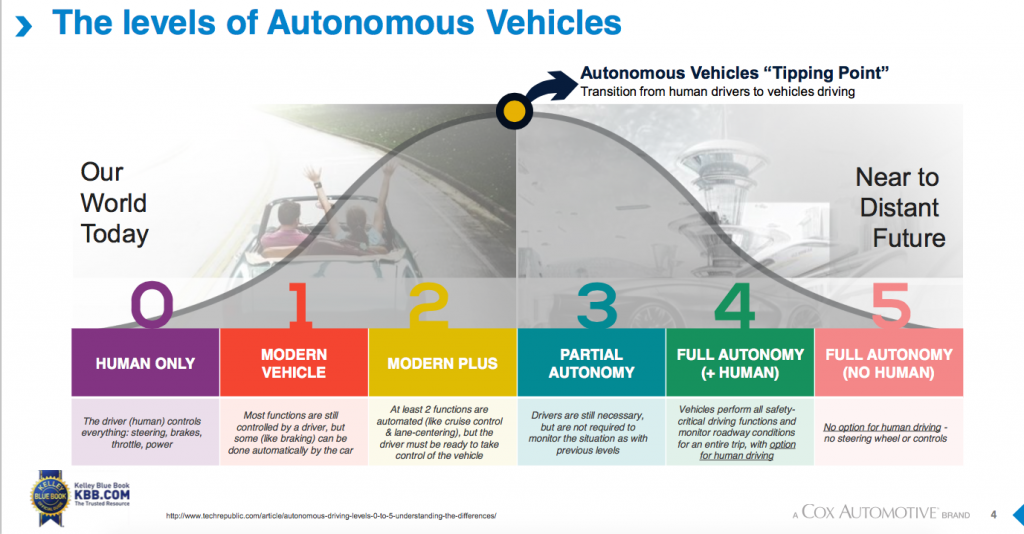

To begin with, “What are self-driving cars?” Although current Advanced Driver-Assistance Systems (ADAS) provide important safety functions such as pre-collision warnings, steering assistance, and automatic braking, self-driving vehicles take these technologies to the next level by completely removing the need for a driver, i.e. level 5 autonomy.

How do they work?

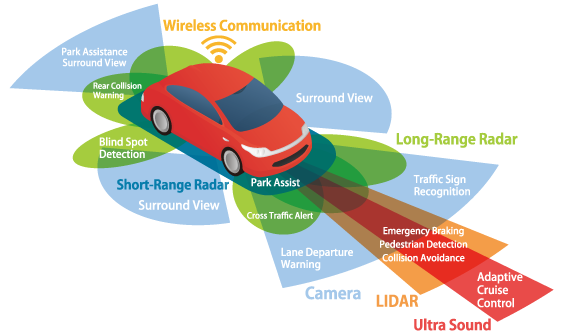

Self-driving vehicles employ a wide range of technologies like radar, cameras, ultrasound, and radio antennas to navigate safely on our roads.

Technologies for object detection:

- Ultrasonic Sensors: With an operating range of about 2 m, ultrasonic sensors are used for parking assistance.

- Image Recognition: Cameras are used for visual detection and identification of elements on the road to a high degree of specificity, because of the great detail that can be extracted from pictures and videos. They can be used to figure out where the lanes are, whether the traffic light is green or red, and even to identify the objects in the vehicle’s path. In this way, image recognition is important for traffic situation modeling, because the system can see the situation in the same way as a human driver. Today’s systems in market-available cars work with one or more cameras placed at the front, the rear, and the wing mirrors of the vehicle. A 2D camera delivers a 2D image without depth information. It therefore provides information about object size and position, but only marginal data about the class and type of the objects. 3D cameras are able to deliver more information about the depth of a scene. 3D information can be generated in different ways, e.g. by structured light or by time-of-flight (ToF) technology. To extend camera-based systems to low-light conditions, infrared night vision systems are used. Cameras are a cost-effective way to identify objects, but the sensing quality strongly depends on environmental factors, e.g. weather and light reflection. Despite the rich data cameras provide, in most cases they cannot gauge depth or the velocities of other elements on the road.

- Radar: Radar sensors measure the distance and relative velocity of objects with high accuracy. An important advantage of radar-based systems is their robust and reliable operation under different environmental conditions, e.g. weather, dust, and pollution. They have a long operating range of up to 250 m, but the information they deliver about obstacle geometry is limited. Long-range radar is used in automated cruise control functions to identify other cars on the road. Front and rear mid-range radar systems, with a range from 30 to about 150 m, are used in emergency brake assistants, collision detection, and highway assistants (supporting automated overtaking, lane change assistance, and blind spot detection). Drawbacks include higher costs in comparison with camera-based systems and a relatively coarse representation of objects, which is based on a limited number of radar lobes.

- LiDAR: Seen as the most powerful, but also the most expensive, technology for object recognition. A fast-rotating laser sensor provides several million data points per second, enabling the creation of detailed 3D maps of both surrounding objects and the environment, with an operating range of more than 100 m. Mapping the environment, including area topology, buildings, and objects beside the road, is especially important for autonomous driving functions. The system consists of 5 components: laser scanner, GPS, scanner (to measure the angle at which each laser pulse was fired), Inertial Measurement Unit (IMU), and clock. A key advantage of the technology in comparison with camera-based systems is that its function is not influenced by ambient lighting conditions, because LiDAR emits its own laser light. On the other hand, cameras provide a higher resolution and are able to detect colors.
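To make the LiDAR idea a little more concrete, here is a minimal Python sketch of how a single laser return could be turned into a point on a map. It assumes the simplest possible model: the clock gives the round-trip time of a pulse, the scanner gives the firing angle, and the sensor sits at the origin of a flat 2D plane. The function names (`tof_to_range`, `polar_to_point`, `scan_to_points`) are made up for illustration and do not come from any real LiDAR library.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_to_range(round_trip_s: float) -> float:
    """Distance to the target, given the round-trip time of a laser pulse.

    The pulse travels to the object and back, so the one-way distance
    is half of (speed of light * elapsed time).
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def polar_to_point(range_m: float, angle_rad: float) -> tuple[float, float]:
    """Convert a (range, firing angle) pair to x/y coordinates.

    Assumption: the sensor is at the origin and angle 0 points along +x.
    """
    return (range_m * math.cos(angle_rad), range_m * math.sin(angle_rad))


def scan_to_points(returns: list[tuple[float, float]]) -> list[tuple[float, float]]:
    """Turn a list of (round_trip_seconds, angle_rad) returns into 2D points."""
    return [polar_to_point(tof_to_range(t), angle) for t, angle in returns]


if __name__ == "__main__":
    # Hypothetical returns: three pulses fired at 0, 45 and 90 degrees.
    sample = [(400e-9, 0.0), (667e-9, math.pi / 4), (200e-9, math.pi / 2)]
    for x, y in scan_to_points(sample):
        print(f"x = {x:7.2f} m, y = {y:7.2f} m")
```

A real sensor would also use the GPS and IMU readings to place each point in world coordinates while the car moves; that step is left out here to keep the sketch short.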

Here, we hit a snag! We have seen that no single sensor can provide all the information required to make an effective decision. The solution? Yes, bingo! The car combines the data from all its sensors to build the best possible picture of its surroundings. This is called Sensor Fusion.

These sensor technologies are used according to their ranges of application and combined with the Global Positioning System (GPS).
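How the combining is done varies from system to system, and production cars use far more elaborate methods. As a rough illustration only, the Python sketch below fuses several distance readings for the same object by inverse-variance weighting, a simple textbook technique chosen here for clarity: each sensor reports a value plus how uncertain it is, and the more certain sensors get more say. The function name `fuse_estimates` and the sample numbers are hypothetical.

```python
def fuse_estimates(estimates: list[tuple[float, float]]) -> tuple[float, float]:
    """Fuse independent measurements of the same quantity.

    estimates: list of (value, variance) pairs, one per sensor.
    Returns (fused_value, fused_variance).

    Each reading is weighted by 1 / variance, so a precise sensor
    (small variance) pulls the result towards its own reading.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(variance <= 0 for _, variance in estimates):
        raise ValueError("variances must be positive")

    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


if __name__ == "__main__":
    # Hypothetical readings of the distance to the car ahead, in metres:
    camera = (50.0, 4.0)  # cameras judge depth poorly, so a large variance
    radar = (52.0, 1.0)   # radar measures distance well, so a small variance
    distance, variance = fuse_estimates([camera, radar])
    print(f"fused distance = {distance:.1f} m (variance {variance:.2f})")
```

With these made-up numbers the fused distance lands at 51.6 m, closer to the radar reading, and the fused variance (0.8) is smaller than that of either sensor alone, which is the whole point of combining them.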

Once this is done, it’s time for the hard part: identifying what’s what. Is it a dog or a child crossing the road? Is it a leaf or a pigeon? The real work here relies on machine learning: the art of teaching a robot that this cluster of dots is a little girl crossing the road and that this swath of pixels is a small puppy. But once it knows how to see, the question of how to drive gets easy: Don’t hit either one of them.

– By Poorvi S H M, Third Year Department of Electronics and Communication Engineering